| Capability | EigenCompute | Smart Contracts | Traditional Apps |
|---|---|---|---|
| Trust Model | Verify code via attestation | Verify on-chain bytecode | Trust developer |
| Key Management | Platform-controlled, attestation-gated | Protocol-controlled | Developer-controlled |
| Data Privacy | Encrypted memory, isolated execution | All data public on-chain | Depends on developer |
| Languages | Any | Solidity/Vyper | Any |
| External APIs | Direct HTTPS | Oracle-only | Direct HTTPS |
| Compute Power | Up to 176 vCPUs, 704 GB RAM | Gas-limited | Unlimited |

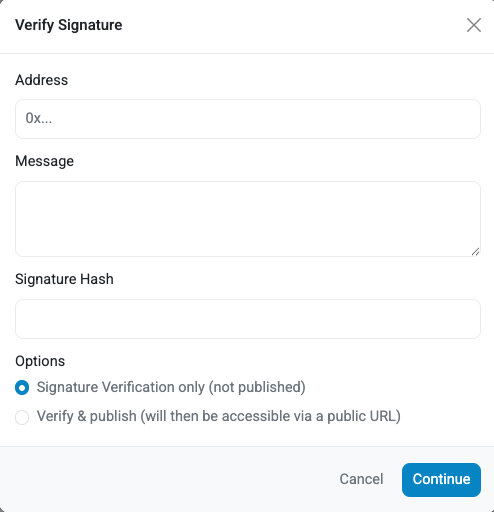

From the API response, enter:

1. `signer` in the _Address_ field. The `signer` is one of the signing addresses derived from the TEE mnemonic.

2. `message` in the _Message_ field.

3. `signature` in the _Signature Hash_ field.

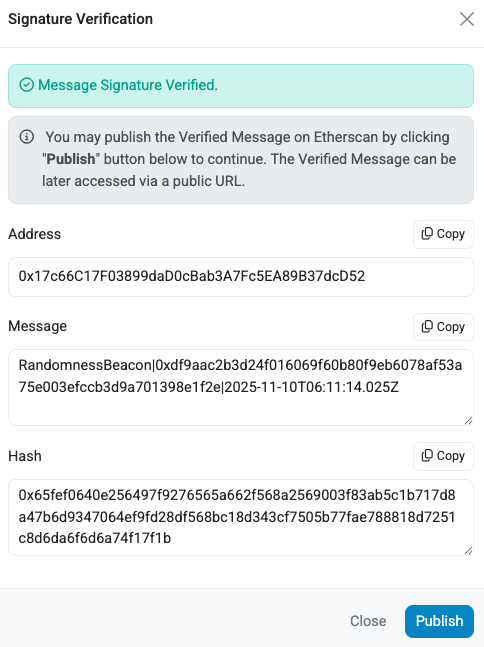

Click the **Verify** button. The _Signature Verification_ window is displayed and indicates the message signature was verified.


Signature verification confirms that the message was signed by the `signer` in the response.

To verify that the `signer` is one of the signing addresses derived from the TEE mnemonic, use the Verifiability Dashboard ([Mainnet](https://verify.eigencloud.xyz/) and [Sepolia Testnet](https://verify-sepolia.eigencloud.xyz/)) to confirm that the signing address is one of the _Derived Addresses_ displayed for the application.
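Once the _Derived Addresses_ have been copied from the dashboard, the same membership check can be scripted. The sketch below is illustrative only: `normalize_address` and `is_derived_signer` are hypothetical helpers, and the comparison is case-insensitive on the assumption that mixed-case (EIP-55 checksum) and lowercase spellings refer to the same address.

```python
import string


def normalize_address(address: str) -> str:
    """Lowercase a 0x-prefixed, 20-byte hex address after basic shape checks."""
    addr = address.strip().lower()
    body = addr[2:]
    if not addr.startswith("0x") or len(body) != 40:
        raise ValueError(f"not a 20-byte hex address: {address!r}")
    if not all(ch in string.hexdigits for ch in body):
        raise ValueError(f"non-hex characters in address: {address!r}")
    return addr


def is_derived_signer(signer: str, derived_addresses: list[str]) -> bool:
    """Return True if `signer` appears in the list copied from the dashboard."""
    known = {normalize_address(a) for a in derived_addresses}
    return normalize_address(signer) in known
```

Pass the `signer` value from the API response as the first argument and the addresses listed on the dashboard as the second.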

---

---

title: Use app wallet

sidebar_position: 1

---

import Tabs from '@theme/Tabs';

import TabItem from '@theme/TabItem';

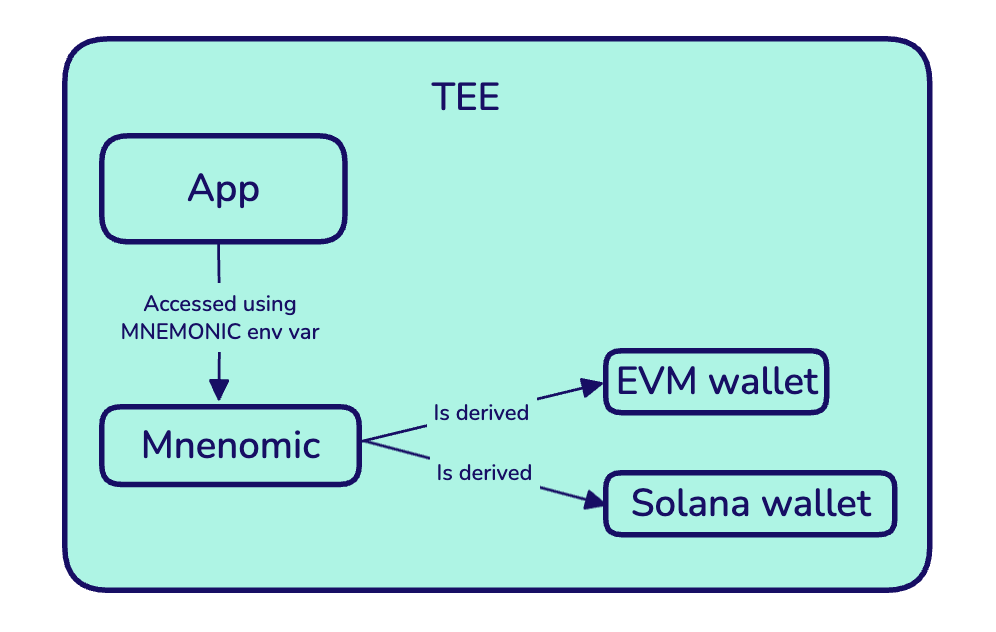

:::important

The TEE mnemonic is generated by the KMS and bound to your app's enclave. Once injected, the mnemonic's safety depends on the app not leaking it.

Any mnemonic you see in `.env.example` is a placeholder for local development. The TEE overwrites the placeholder with the actual KMS-generated mnemonic, which is unique and persistent to your app. Only your specific TEE instance can decrypt and use this mnemonic.

:::

When deployed, EigenCompute apps receive a persistent, private wallet that serves as the app's cryptographic identity, allowing the app to sign transactions, hold funds, and operate autonomously.

The TEE mnemonic is generated by the KMS and is decryptable only inside your specific TEE application. It is provided at runtime through the `MNEMONIC` environment variable, and the wallet addresses are derived from it.


## Derive Address from TEE Mnemonic

### Ethereum

To derive the Ethereum wallet address from the `MNEMONIC` environment variable:
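As a partial sketch, and assuming the TEE mnemonic is a standard BIP-39 phrase (an assumption, not something stated here), the first step of derivation is turning the phrase into a 64-byte seed. The standard library covers this step; the remaining steps (BIP-32 child-key derivation over secp256k1 and Keccak-256 hashing of the public key) need a wallet library and are not shown. `mnemonic_to_seed` is a hypothetical helper name.

```python
import hashlib
import os
import unicodedata


def mnemonic_to_seed(mnemonic: str, passphrase: str = "") -> bytes:
    """Return the 64-byte BIP-39 seed for a mnemonic phrase."""
    # BIP-39 applies NFKD normalization to both the phrase and the salt.
    words = unicodedata.normalize("NFKD", " ".join(mnemonic.split()))
    salt = unicodedata.normalize("NFKD", "mnemonic" + passphrase)
    # PBKDF2-HMAC-SHA512 with 2048 iterations, as specified by BIP-39.
    return hashlib.pbkdf2_hmac(
        "sha512", words.encode("utf-8"), salt.encode("utf-8"), 2048, dklen=64
    )


# Inside the TEE the phrase arrives via the MNEMONIC environment variable.
mnemonic = os.environ.get("MNEMONIC")
if mnemonic:
    seed = mnemonic_to_seed(mnemonic)
    # Never log the seed itself; only its length is reported here.
    print(f"derived a {len(seed)}-byte seed")
```

The seed is as sensitive as the mnemonic, so it should be kept in memory only and never written to logs or responses.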


---

---

title: Whitepaper

sidebar_position: 5

---

**EigenAI Whitepaper** ([PDF](/pdf/EigenAI_Whitepaper.pdf)): introduces EigenAI's deterministic inference stack, which enables bit-exact reproducible LLM outputs on production GPUs (validated across 10,000 runs) with less than about 2% overhead. The paper explains why GPU nondeterminism breaks verifiable autonomous agents and how EigenAI enforces determinism across hardware, math libraries, and the inference engine. It then layers optimistic verification and cryptoeconomic enforcement (disputes, re-execution by verifiers, and slashing for mismatches) to make AI execution replayable, auditable, and economically accountable.

---

---

title: Build Trustless Agents with ERC-8004 and EigenCloud

sidebar_position: 3

---

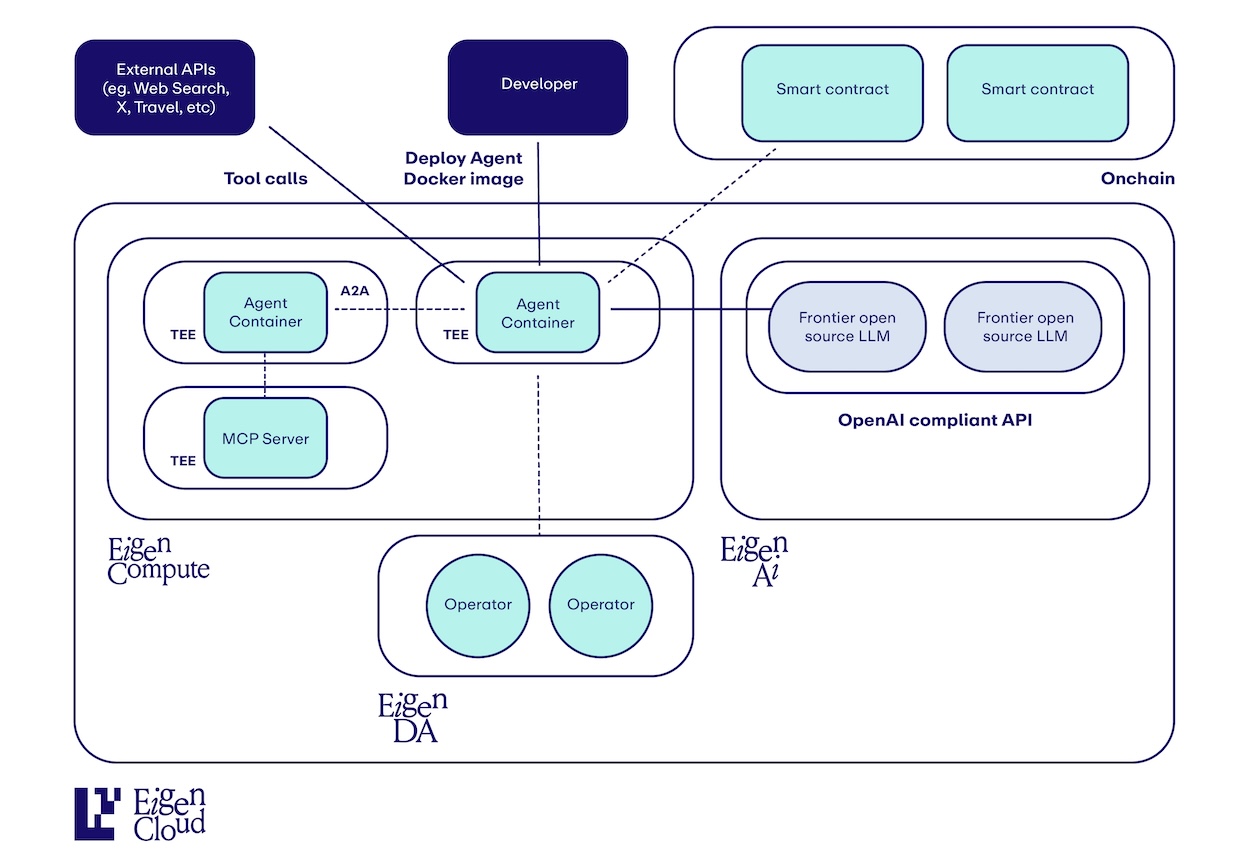

# How to Build Trustless Agents with ERC-8004 and EigenCloud

This guide shows how to build verifiable AI agents using [ERC-8004](https://eips.ethereum.org/EIPS/eip-8004), [Agent0 SDK](https://sdk.ag0.xyz/), [EigenAI](https://docs.eigencloud.xyz/eigenai/concepts/eigenai-overview), and [EigenCompute](https://docs.eigencloud.xyz/eigencompute/get-started/eigencompute-overview).

> **Note**: This guide uses Python examples, but both the OpenAI SDK and Agent0 SDK are also available in TypeScript.

## Why ERC-8004 + EigenCloud?

EigenAI and EigenCompute provide **verifiable, deterministic AI execution**. ERC-8004 provides **decentralized identity and reputation** for those agents.

Together, they enable trustless AI economies where:

- Agents prove their execution integrity (via EigenAI/EigenCompute TEEs)

- Agents advertise capabilities and build reputation on-chain (via ERC-8004)

- Other agents discover and evaluate them without intermediaries (via Agent0 SDK)

## Quick Architecture

**EigenCompute** → Runs your agent logic in a TEE with its own wallet

**EigenAI** → Provides deterministic, verifiable LLM inference

**ERC-8004** → Registers your agent identity on-chain as an NFT

**Agent0 SDK** → Manages registration, discovery, and reputation

## Getting Started

### 1. Build Your Agent Logic

Create your agent with EigenAI inference:

```python
import os

from openai import OpenAI

# API key read from the environment (the variable name is an assumption)
eigenai_api_key = os.environ["EIGENAI_API_KEY"]

# EigenAI client (OpenAI-compatible)
client = OpenAI(
    base_url="https://eigenai.eigencloud.xyz/v1",
    default_headers={"x-api-key": eigenai_api_key},
)

# Deterministic inference with seed
response = client.chat.completions.create(
    model="gpt-oss-120b-f16",
    seed=42,  # Same seed = same output (verifiable!)
    messages=[{"role": "user", "content": "Should I buy or sell?"}],
)

# Response includes cryptographic signature
print(response.signature)  # Verify this matches if you re-run
```

**Why this matters**: With EigenAI, anyone can verify your agent's decisions by re-running the same prompt with the same seed and checking the signature.
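The re-run check described above comes down to a byte-exact comparison of two outputs for the same model, seed, and messages. A minimal sketch, assuming the completion text has already been extracted from each response; `fingerprint` and `rerun_matches` are hypothetical helpers, and the response signature itself is not modeled here.

```python
import hashlib
import json
from typing import Callable


def fingerprint(model: str, seed: int, messages: list[dict], completion: str) -> str:
    """Hash the request parameters together with the completion text."""
    canonical = json.dumps(
        {"model": model, "seed": seed, "messages": messages, "completion": completion},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def rerun_matches(
    run: Callable[[], str],
    model: str,
    seed: int,
    messages: list[dict],
    claimed_completion: str,
) -> bool:
    """Re-execute the request via `run` and compare it with the claimed output."""
    expected = fingerprint(model, seed, messages, claimed_completion)
    actual = fingerprint(model, seed, messages, run())
    return expected == actual
```

In practice `run` would wrap the `client.chat.completions.create(...)` call shown earlier and return the completion text; any difference in the output, however small, makes the check fail.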

### 2. Register Your Agent with ERC-8004

Before deploying to EigenCompute, register your agent identity on-chain:

```python
from agent0_sdk import SDK

# Initialize SDK (Sepolia testnet)
sdk = SDK(
    chainId=11155111,
    rpcUrl="https://sepolia.infura.io/v3/YOUR_PROJECT_ID",
    signer=your_private_key,
    ipfs="pinata",
    pinataJwt=your_pinata_jwt,
)

# Create agent
agent = sdk.createAgent(
    name="EigenAI Trading Agent",
    description="Verifiable trading agent using deterministic LLM inference. Uses EigenAI for decision-making with guaranteed reproducibility.",
    image="https://example.com/agent.png",
)

# Add MCP endpoint (will be your EigenCompute URL after deployment)
agent.setMCP("https://your-eigencompute-agent.com/mcp")

# Enable x402 payments (for clients paying YOUR agent)
agent.setX402Support(True)
# Note: this agent still uses API keys to pay for EigenAI inference.
# x402 support for EigenAI is coming soon.

# Set trust models - TEE attestation is key for Eigen!
agent.setTrust(
    teeAttestation=True,  # EigenCompute provides TEE attestations
    reputation=True,  # Build reputation via feedback
    cryptoEconomic=True,  # Optional: add economic stakes
)

# Register on-chain
agentId = agent.register()
print(f"Agent registered: {agentId}")
```

### 3. Deploy to EigenCompute

Now deploy your agent to a TEE with its own wallet:

```bash
# Initial deployment
ecloud compute app deploy --image-ref myagent:latest
```

Your deployed agent now has:

- Hardware-isolated execution (Intel TDX)

- A unique wallet for autonomous operations

- Cryptographic proof of its Docker image (proving exactly what code is running)

After deployment, update your agent registration with the wallet address:

```python
# Load your registered agent
agent = sdk.loadAgent(agentId)

# Set agent wallet (from EigenCompute deployment)
agent.setAgentWallet("0x742d35...bEb", chainId=11155111)

# Update registration on-chain
agent.register()
```

**Deploying Updates**: When you update your agent code, deploy the new version:

```bash
ecloud compute app upgrade
```

This creates a new cryptographic attestation for the updated Docker image while maintaining the same agent identity and wallet.

## Discovery: Finding Verifiable Agents

```python
# Search for agents with TEE attestation
agents = sdk.searchAgents(
    trustModels=["tee-attestation"],  # Only verifiable agents
    x402support=True,  # Payment-enabled
    active=True,
)

# Print the wallet, attestation status, and reputation of each match
for agent in agents:
    print(f"{agent.name}: {agent.walletAddress}")
    print(f"TEE-attested: {agent.hasTEEAttestation}")
    print(f"Reputation: {agent.reputationScore}")
```

## Building Reputation with Verifiable Feedback

```python
# After using an EigenAI agent
feedback = sdk.prepareFeedback(
    agentId="11155111:123",
    score=95,
    tags=["accurate", "fast"],
    # Optional: Include payment proof from x402
    proofOfPayment={
        "fromAddress": "0x...",
        "toAddress": agent.walletAddress,
        "chainId": "11155111",
        "txHash": "0x...",
    },
)

sdk.submitFeedback(feedback)
```
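The `agentId` in the feedback call appears to combine a chain ID and a per-chain agent number separated by a colon (`11155111` is the Sepolia chain ID used when initializing the SDK). A small parsing sketch under that assumption; `AgentRef` and `parse_agent_id` are hypothetical and not part of the Agent0 SDK.

```python
from typing import NamedTuple


class AgentRef(NamedTuple):
    chain_id: int
    token_id: int  # assumed to be the agent's on-chain (NFT) identifier


def parse_agent_id(agent_id: str) -> AgentRef:
    """Split an ID of the assumed form '<chainId>:<tokenId>' into integers."""
    chain, sep, token = agent_id.partition(":")
    parts_ok = all(p.isascii() and p.isdigit() for p in (chain, token))
    if not sep or not parts_ok:
        raise ValueError(f"expected '<chainId>:<tokenId>', got {agent_id!r}")
    return AgentRef(int(chain), int(token))
```

Validating the ID before submitting feedback makes it easier to catch a mismatch between the chain the SDK is connected to and the chain the agent is registered on.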

## Complete Example: Verifiable Trading Agent

```python
# 1. Build agent logic with EigenAI
# Your agent.py uses deterministic EigenAI calls

# 2. Register with ERC-8004
agent = sdk.createAgent(
    name="AlphaBot",
    description="TEE-attested trading agent with deterministic decision-making",
)
agent.setMCP("https://alphabot.eigencompute.xyz/mcp")
agent.setTrust(teeAttestation=True, reputation=True)
agent.setX402Support(True)
agentId = agent.register()

# 3. Deploy to EigenCompute
# $ ecloud compute app deploy --image-ref alphabot:latest

# 4. Update registration with wallet
agent = sdk.loadAgent(agentId)
agent.setAgentWallet(eigencompute_wallet_address)
agent.register()

# 5. Other agents discover and trust your agent
results = sdk.searchAgents(
    capabilities=["trading"],
    trustModels=["tee-attestation"],
)

# 6. Build reputation through verifiable interactions
```

## Key Benefits

### For Agent Developers

- **Verifiable execution**: TEE attestations prove your agent runs unmodified code

- **Deterministic AI**: Same inputs always produce same outputs (with seed)

- **Autonomous identity**: Agent wallet can hold funds and sign transactions

- **Discoverability**: Agents find you via indexed capabilities and trust signals

### For Agent Users

- **Trust**: Verify agent execution and decisions cryptographically

- **Reputation**: See on-chain feedback history before engaging

- **Transparency**: Audit trail of what code is running (Docker digest on-chain)

- **Payment security**: x402 payments to attested agent wallets

## Trust Model Architecture

```
┌─────────────────┐
│  EigenCompute   │ → TEE Attestation (proves code integrity)
│  (TEE + Wallet) │
└────────┬────────┘
         │
         ↓
┌─────────────────┐
│     EigenAI     │ → Deterministic Inference (verifiable outputs)
│  (Signed LLM)   │
└────────┬────────┘
         │
         ↓
┌─────────────────┐
│    ERC-8004     │ → On-chain Identity + Reputation
│  (Agent0 SDK)   │
└─────────────────┘
```

## Next Steps

1. **Get Access**: [Contact us](https://ein6l.share.hsforms.com/2L1WUjhJWSLyk72IRfAhqHQ) for [EigenAI](https://docs.eigencloud.xyz/eigenai/concepts/eigenai-overview) and [EigenCompute](https://docs.eigencloud.xyz/eigencompute/get-started/eigencompute-overview)

2. **Build Your Agent**: Integrate EigenAI for deterministic inference

3. **Register Identity**: Use [Agent0 SDK](https://sdk.ag0.xyz/) to register on ERC-8004

4. **Deploy to TEE**: Follow [EigenCompute quickstart](https://docs.eigencloud.xyz/products/eigencompute/quickstart)

5. **Build Reputation**: Submit and receive feedback for verifiable interactions

## Resources

- **Agent0 SDK**: [Python and TypeScript](https://sdk.ag0.xyz/)

- **ERC-8004 Spec**: [EIP-8004](https://eips.ethereum.org/EIPS/eip-8004)

- **EigenAI Docs**: [docs.eigencloud.xyz/eigenai](https://docs.eigencloud.xyz/eigenai/concepts/eigenai-overview)

- **EigenCompute Docs**: [docs.eigencloud.xyz/eigencompute](https://docs.eigencloud.xyz/eigencompute/get-started/eigencompute-overview)

---

---

title: Use EigenAI

sidebar_position: 2

---

import Tabs from '@theme/Tabs';

import TabItem from '@theme/TabItem';

## Get Access

EigenAI is available on request. To get access, please [contact us](https://ein6l.share.hsforms.com/2L1WUjhJWSLyk72IRfAhqHQ).

We currently support the `gpt-oss-120b-f16` and `qwen3-32b-128k-bf16` models, and are expanding from there. To request access or inquire about additional models, please [contact us](https://ein6l.share.hsforms.com/2L1WUjhJWSLyk72IRfAhqHQ).

## Chat Completions API Reference

Refer to the [swagger documentation for the EigenAI API](https://docs.eigencloud.xyz/api).

## Chat Completions API Examples
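A minimal sketch of a chat completions request built with the Python standard library. The base URL, `x-api-key` header, `seed` parameter, and model name are the ones used in the ERC-8004 guide; the `/chat/completions` path is assumed from OpenAI compatibility, and `build_chat_request` is a hypothetical helper. Consult the Swagger reference for the authoritative request shape.

```python
import json
import urllib.request

EIGENAI_BASE_URL = "https://eigenai.eigencloud.xyz/v1"


def build_chat_request(
    api_key: str,
    prompt: str,
    seed: int = 42,
    model: str = "gpt-oss-120b-f16",
) -> urllib.request.Request:
    """Build (but do not send) a POST request for a single-turn chat completion."""
    payload = {
        "model": model,
        "seed": seed,  # fixed seed so the request can be replayed
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{EIGENAI_BASE_URL}/chat/completions",  # path is an assumption
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )
```

To send the request, pass the returned object to `urllib.request.urlopen` and decode the JSON body of the response.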
